A few months ago, Nicolai Kraus posted an interesting and surprising result: the truncation map |_| : ℕ → ‖ℕ‖ is nearly invertible. This post attempts to explain why “nearly invertible” is a misnomer.

I, like many others, was very surprised by Nicolai’s result. I doubted the result, tried to push the example through using setoids (the clunky younger sibling of higher inductive types), succeeded, and noted that the key step was, unsurprisingly, that the notion of “respecting an equivalence relation” for a dependent function involved transporting across an appropriate isomorphism of the target types. I accepted it, and added it to my toolbox of counterexamples to the idea that truncation hides information. It was surprising, but not particularly troubling; my toolbox had already contained the example that, for any fixed of any type,

is contractible, even though

might not be; hence erasure of contractible types results in full proof irrelevance, contradicting univalence. More precisely, if inhabitants of contractible types are judgmentally equal, then we can apply the second projection function to the judgmental equality

(x; p) ≡ (x; refl) and obtain p ≡ refl for any proof p : x = x.

Others had more extreme reactions. This post made some people deeply uncomfortable. Mike Shulman became suspicious of judgmental equality, proposing that β-reduction be only propositional. When I brought this example up in my reading group last week, David Spivak called it a “hemorrhaging wound” in our understanding of homotopy type theory; this example deeply violated his homotopical intuition, and really required a better explanation.

What follows is my attempt to condense a four hour discussion with David on this topic. After much head-scratching, some Coq formalization, and a decent amount of back-and-forth, we believe that we pinpointed the confusion to the notion of composition, and didn’t see any specific problems with judgmental equality or β-reduction.

To elucidate the underlying issues and confusion, I will present a variant of Nicolai’s example with much simpler types. Rather than using the truncation of the natural numbers ‖ℕ‖, I will use the truncation of the booleans ‖Bool‖, which is just the interval. All code in this article is available on gist.

We construct a function out of the interval which sends zero to true and one to false, transporting across the negb (boolean negation) equivalence:

Definition Q : interval → Type := interval_rectnd Type Bool Bool (path_universe negb). Definition myst (x : interval) : Q x := interval_rect Q true false ....

The ... in the definition of myst is an uninteresting path involving univalence, whose details are available in the corresponding code.

The corresponding Agda code would look something like

Q : interval → Type Q zero = Bool Q one = Bool Q seg = ua ¬_ myst : (x : interval) → Q x myst zero = true myst one = false myst seg = ...

We can now factor the identity map on Bool through this function and the inclusion i:

Definition i (x : Bool) : interval := if x then zero else one. Definition id_factored_true : myst (i true) = true := idpath. Definition id_factored_false : myst (i false) = false := idpath.

(Note: If we had used ‖Bool‖ rather than the interval, we would have had a slightly more complicated definition, but would have had judgmental factorization on any variable of type Bool, not just true and false.)

Nicolai expounds a lot more on the generality of this trick, and how it’s surprising. My discussion with David took a different route, which I follow here. As a type theorist, I think in s, in dependent functions; Nicolai’s trick is surprising, and tells me that my intuitions about functions don’t carry over well into type theory, but nothing more. As a category theorist, David thinks in morphisms of types; Nicolai’s trick is deeply disturbing, because the truncation morphism is fundamentally not invertible, and if it’s invertible (in any sense of the word), that’s a big problem for homotopy type theory.

Let’s look more closely at the interface of these two pictures. It is a theorem of type theory (with functional extensionality) that there is an equivalence between dependent functions and sections of the first projection. The following two types are equivalent, for all and all

:

So what does myst look like on the fibration side of the picture?

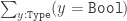

We have the following pullback square, using 2 for Bool and I for the interval:

The composition myst_sig_i ≡ myst_sig ∘ i induces the section myst_pi_i corresponding to the β-reduced composite myst ∘ i, which Nicolai called id-factored. (This is slightly disingenuous, because here (as in Nicolai’s post), ∘ refers to the dependent generalization of composition.) In Coq, we can define the following:

Definition myst_sig : interval → { x : interval & Q x }

:= λ x ⇒ (x; myst x).

Definition myst_sig_i : Bool → { x : interval & Q x }

:= λ b ⇒ myst_sig (i b).

Definition myst_pi_i : Bool → { x : Bool & Q (i x) }

:= λ b ⇒ (b; myst (i b)).

David confronted me with the following troubling facts: on the fibrational, as sections of

side of the picture, we have

myst_sig_i true = myst_sig_i false, but myst_pi_i true ≠ myst_pi_i false. Furthermore, we can prove both the former equation and the latter equation in Coq! But, on the other side of the picture, the dependent function side, we want to identify these functions, not just propositionally (which would be bad enough), but judgementally!

So what went wrong? (Think about it before scrolling.)

Those of you who were checking types carefully probably noticed that myst_sig_i and myst_pi_i have different codomains.

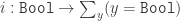

The reason they have different codomains is that we constructed myst_sig_i by composing morphisms in the fibrational picture, but we constructed myst_pi_i by composing functions on the dependent function side of the picture. Dependent function composition is not morphism composition, and involves the extra step of taking the morphism induced by the pullback; if we want to recover morphism composition, we must further compose with the top morphism in the pullback diagram:

We can also see this by shoving dependent function composition across the –

equivalence, which I do here in Coq.

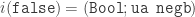

We have explained what the mistake was, but not why we made it in the first place. David came up with this diagram, which does not commute, expressing the mistake:

Type theorists typically think of dependent functions as a generalization of non-dependent functions. In sufficiently recent versions of Coq, → is literally defined as a type over a constant family. Category theorists think of

types as a special case of morphisms with extra data; they are sections of the first projection of a

. Non-dependent functions are (isomorphic to) morphisms in the category of types. Composition of non-dependent functions is composition of morphisms. But there is another embedding: all functions (dependent and non-dependent) can be realized as sections of first projections. And this embedding does not commute with the isomorphism between non-dependent functions and morphisms in the category of types. Saying, the identity (judgmentally) factors through dependent function composition with a particular non-dependent function, is very, very different from saying that the identity morphism factors through the corresponding morphism in the category of types.

So the issue, we think, is not with judgmental equality nor judgmental β-reduction. Rather, the issue is with confusing dependent function composition with morphism composition in the category of types, or, more generally, in confusing dependent functions with morphisms.

And as for the fact that this means that truncation doesn’t hide information, I say that we have much simpler examples to demonstrate this. When I proposed equality reflection for types with decidable equality, Nicolai presented me with the fact that is contractible, even when

is not an hSet, which he discovered from Peter LeFanu Lumsdaine. Because we can take the second projection, we can obtain non-equal terms from equal equal terms. I would argue that the solution to this is not to reduce the judgmental equalities we have in our type-theory, but instead, as Vladimir Voevodsky proposes, make judgmental equalities a first class object of our proof assistant, so that we have both a reflected, non-fibrant, extensional, judgmental equality type a la NuPrl, and a fibrant, intensional, propositional equality type a la Coq and Agda. (I believe Dan Grayson has implemented a toy proof checker with both of these equality types.) I think that the question of what can be done when you hypothesize judgmental equalities is under-studied in homotopy type theory, and especially under-formalized. In particular, I’d like to see an answer to the question of which propositional equalities can safely be made judgmental, without compromising univalence.

![$$\xymatrix{ \sum\limits_{x:2} Q(i(x)) \pullbackarrow[ddr] \ar[r] \ar[dd]^-{\texttt{pr}_1} & \sum\limits_{x : I} Q(x) \ar[dd]^-{\texttt{pr}_1} \\ \\ 2 \ar@/^/[uu]^-{\texttt{myst\_pi\_i}} \ar[r]_i & I \ar@/^/[uu]^-{\texttt{myst\_sig}} }$$](https://homotopytypetheory.org/wp-content/uploads/2014/02/myst_pi_fibration_diagram.png?h=175&w=640)

![$$\xymatrix{ B \ar[rr]_-g && \sum\limits_{x:B} Q(x)\ar@/_/[ll]_-{\texttt{pr}_1} \\ A \ar[rr]_-f && B \\ A \ar[rr]_-{\text{``$g\circ f$''}} && \sum\limits_{x:A}Q(f(x)) \ar@/_/[ll]_-{\texttt{pr}_1} \ar[rr] && \sum\limits_{y:B} Q(y) }$$](https://homotopytypetheory.org/wp-content/uploads/2014/02/composition_diagram.png?h=200&w=640)

![$$\xymatrix{ \text{sections of fibrations} \ar@{^{(}->}[d] \ar@{}[drr]|-{\text{\color{red}\huge\xmark}} && \text{functions}\ar[ll] \ar@{}[d]|{\rotatebox{90}{$\subseteq$}} \\ \text{morphisms of types} \ar@/^/[rr] \ar@{}[rr]|-{\cong} && \text{non-dependent functions} \ar@/^/[ll] }$$](https://homotopytypetheory.org/wp-content/uploads/2014/02/no_commute.png?h=95&w=640)

… guessing off the top of my head (so I may be wrong, as often enough… ), if one can hypothesize judgmental equalities, then one can talk about reduced suspensions/joins/pushouts, and talk directly about equivariance puzzles, and strictly equational theories… I would guess there should be really-and-truely no problem with defining simplicial types… it might be nice; but I’ve had such a fun time just trying to figure out how to do without all of those, too!

For what it’s worth, Nicolai’s trick made me more suspicious of judgmental equalities not because it violated my homotopical/categorical intuition. In fact, it fit perfectly with my intuition, once I compiled it out in categorical language; I certainly wouldn’t call it a hemorrhaging wound! Rather, I was just bothered that judgmental equality can “sweep a nontrivial transport under the rug”, as I said in that comment.

Having now read your post more carefully, it doesn’t reduce my suspicion of judgmental equalities any. You’re absolutely right, of course, that dependent function composition is not just categorical composition but also involves a pullback. But I don’t think that that really has much to do with the paradox, although it’s certainly a necessary thing to understand before tackling the paradox. The paradox is that we have a function , and a dependent function

, and a dependent function  , such that for any

, such that for any  we have

we have  . Whatever dependent function composition means on the categorical side, the point is that we (naively) expect the element

. Whatever dependent function composition means on the categorical side, the point is that we (naively) expect the element  to have forgotten the identity of

to have forgotten the identity of  , whereas we can actually recover

, whereas we can actually recover  from it by applying the operation

from it by applying the operation  . And the reason that is possible, it seems to me, is because judgmental equality can hide what on the categorical level would be a transport across a nontrivial isomorphism.

. And the reason that is possible, it seems to me, is because judgmental equality can hide what on the categorical level would be a transport across a nontrivial isomorphism.

Your example of is nice to make a connection to, but it took me a few minutes to extract from it a paradox that looks the same as Nicolai’s. Here’s what I came up with: take

is nice to make a connection to, but it took me a few minutes to extract from it a paradox that looks the same as Nicolai’s. Here’s what I came up with: take  and

and  . Then we have

. Then we have  defined by

defined by  , and the codomain of

, and the codomain of  is contractible. But

is contractible. But  , from which we can extract

, from which we can extract  . Did you have something simpler in mind?

. Did you have something simpler in mind?

I’m having difficulty figuring what you mean by “hide” in saying “judgmental equality can hide what on the categorical level would be a transport across a nontrivial isomorphism”, but let that wait; what I think is going on, in both Nikolai’s example and your pr_2 example, is that the proof assistant is doing fewer computations than homotopical thinking would expect: the term you’re giving *does* have type the universal cover of , which universal cover *is* contractIBLE, but neither coq nor agda bother to apply the contraction/extract a normal form in that type (which is actually the prudent thing to do when normal forms are not guaranteed). It just remembers the term itself untill there is a beta reduction to do — and this is sort-of what I meant last time by singing about cofibrations in every comment. The free space of the physical universe we live in seems to be contractible, at least at the level of detail we can discern, but we aren’t surprised that we can remember where things in it are, relative to eachother.

, which universal cover *is* contractIBLE, but neither coq nor agda bother to apply the contraction/extract a normal form in that type (which is actually the prudent thing to do when normal forms are not guaranteed). It just remembers the term itself untill there is a beta reduction to do — and this is sort-of what I meant last time by singing about cofibrations in every comment. The free space of the physical universe we live in seems to be contractible, at least at the level of detail we can discern, but we aren’t surprised that we can remember where things in it are, relative to eachother.

And so, while there doesn’t seem to be a type to say this, the term you’re giving *also* has the form for

for  an appropriate dependent type. Because neither coq (nor agda) actually thinks “that’s in a contractible type, let me contract it”, we can do the violence of pretending that

an appropriate dependent type. Because neither coq (nor agda) actually thinks “that’s in a contractible type, let me contract it”, we can do the violence of pretending that  is a map to

is a map to  , rather than a dependent map.

, rather than a dependent map.

To put it in Nikolai the magician’s terms, “a term of type ” doesn’t actually constitute mere evidence that I’ve chosen a natural number! The only actual terms in

” doesn’t actually constitute mere evidence that I’ve chosen a natural number! The only actual terms in  are of the form

are of the form  , and neither coq nor agda thinks “this is in a contractible type, let me contract it”.

, and neither coq nor agda thinks “this is in a contractible type, let me contract it”.

I wouldn’t focus too much on coq or agda; the paradox is a paradox with the theory, not just some implementation of it. I think I see your point that a homotopy theorist may expect all terms in a contractible type to get “literally” identified somehow, but I don’t think there’s any sense in which such an identification could be regarded as a “computation”. For one thing, whether or not a given type is contractible is not decidable!

As some of us were just discussing in a github issue, a newcomer to type theory does have to get over the expectation that things s/he is used to regarding as “identical” are not judgmentally identical, hence not literally interchangeable in the theory. In other words, the “equality” of mathematics is propositional equality, not judgmental equality. And once you’ve come to terms with that, I don’t think there’s any additional “computation” on terms of a contractible type that could be expected: any two such terms are already (propositionally) equal.

For what I mean by “hiding a nontrivial isomorphism”, see my other comment.

I was originally thinking of . I believe this type is isomorphic to the interval, and yields the same construction as what I did above. But your construction works too.

. I believe this type is isomorphic to the interval, and yields the same construction as what I did above. But your construction works too.

Edit: More precisely, there a map defined by

defined by  and

and  ; we have , I believe,

; we have , I believe,  .

.

Thanks for posting this explanation, a clarification can only help. I am surprised and happy that my construction has triggered so many discussions. Ultimately, however, the paradox is just what paradoxes use to be: a counter-intuitive construction, but not in any sense a “problem” that one has to do something about (maybe I have not made that clear enough in my original post). The core is, to say it in Mike’s words (I cannot think of anything better), that we “can sweep a nontrivial transport under the rug”, but I don’t think that this has any bad consequences. It only says that we have to adjust our intuition to the theory (you argue in that direction if I understand correctly).

Thanks for the pointer to Dan’s implementation, I was not aware of that. I agree with Jason and Jesse that we should try to figure out which judgmental equalities can safely be considered, and I am also thinking about this.

One small side remark:

Martin has observed that the truncated version of bool can be given the same judgmental properties as the interval (see his Agda code), so there is really no actual difference.

The problem is the other way; I do not think the interval can be given the judgmental properties of truncation (unless we have some kind of judgmental eta for the interval, or more commutation rules for pattern matching). In particular, I don’t see how to define for the interval in such a way that applying the interval eliminator to it reduces appropriately.

for the interval in such a way that applying the interval eliminator to it reduces appropriately.

Ok, but then I don’t understand why you need this direction here. I thought you should have used the truncation of bool, but instead, you used the interval and explained why that is justified; and I supported that justification.

I need that direction if I want judgmental factorization of the function, rather than just judgmental factorization on constructors. The purpose of the parenthetical note was to explain why I used the interval rather than ‖Bool‖ (the types and proofs are a bit simpler), and to note that this resulted in slightly weaker judgmental properties (that the judgmental factorization only occurs on constructors (

trueandfalse) and not arbitrary variables).If that didn’t answer your question, I fear we may be talking past each other (or writing incomplete thoughts); I re-read your two sentences three or four times, and came to a different conclusion about what they said and how to respond each time.

Okay, thanks, now I see what you meant. (I just did not understand that you wanted to make everything work for the interval, rather than making it work for ||Bool|| and using the interval as a tool.)

Oh! I think I get now what you Mike are saying with “hiding” a nontrivial isomorphism; the clunkier way I’d phrase it is that we implicitly have a “pair”

(x ; f) : { x : S1 & x = 0 }and another chosen identification_ : x = 0becausexhappens to be litterally0… you may well have said exactly that already. The fact that naive equivalences could be either adjointified or Joyalized (and that these have essentially the same fiber maps) was a case of a similar coincidence.I didn’t ever mean that a proofchecker *should* collapse types we’ve shown or supposed contractible; leaving it alone, I’m sure, is really the right thing. The lesson, if you will, that I’m drawing from this all is that a proofchecker is not a secure chanel for obfuscation.

Hmm, I’m not saying you’re wrong, but I don’t see why that’s what I’m saying. (-:

Actually, on further reflection, maybe “hiding” is the wrong word.

I feel like I have a decent explanation of this paradox within the “literal” interpretation of homotopy type theory (the one where $x = y$ really truly means that $x$ is the same thing as $y$, as opposed to merely denoting a path between two points which may be distinct).

In the literal interpretation, `interval` certainly has only one point. So why isn’t `myst` constant? The answer is that actually, `myst` *is* constant. Sure, `myst (i true)` is `true` and `myst (i false)` is `false`. But on what grounds might we claim that `myst (i x)` is non-constant? In order to assert that `myst (i true)` and `must (i false)` are different, we must already have a proof that their types are the same. We happen to know that `Bool = Bool` trivially, but this prior knowledge is irrelevant! In order to check whether or not `myst (i x)` is non-constant, we need to use the correct equality proof when comparing `must (i true)` and `must (i false)`. In this case, the correct equality proof is the boolean negation equivalence.

I think this makes more sense if we say that `Q one = YesNo`, where `YesNo` is a two-element set containing `yes` and `no`, and `Q seg` is the equivalence mapping `true` to `yes` and `false` to `no`. Now `myst (i true)` is `true` while `myst (i false)` is `yes`. This doesn’t seem so paradoxical, does it? Since `Bool` and `YesNo` are the same thing, a constant function can be `true` at one point and `yes` at another. The original `myst` is recovered if you just replace `yes` with `false` and `no` with `true`.

Maybe I misunderstand, but that sounds like roughly the same explanation I’ve been proposing: there is a nontrivial transport that gets swept under the rug by the fact that the types of Q zero and Q one appear identical. I don’t think it depends on a literal interpretation, though (and I’m not entirely sure what you mean by the “literal interpretation” anyway — I certainly don’t know of a semantics that behaves in that way).

You’re probably right. I should have read the post more thoroughly before commenting.